Many people seem to use Duplicacy, but it's quite hard to find info about Kopia. The UIs and the workflows they're built around are quite different, but I feel like the backup mechanism behind the scenes is probably similar?

Thinking about this more, though, I have more questions about the parity being within the chunk. Sure, this protects against data loss within a chunk, but what if the corruption occurs in the headers? Would two lost bytes (one in the starting header and one in the ending header) mean the whole chunk is lost? How robustly does it try to reconstruct the header from the two parts? Does there need to be a third header to build a consensus? Duplicacy could try to mix and match the three pieces of the header to satisfy the checksum.

I only ask because I just recently started using Reed-Solomon on a project and specifically made sure the parity was calculated to include the header data, so it could be reconstructed in the event of a lost packet. Granted, that's a different use case than here, but it raised the concern in my mind. In my case I also kept the number of data and parity shards outside of the packets, but that is also less flexible.

Despite any critiques I may bring up, I really like the idea of being able to include parity to provide extra protection in places where the storage or transport might not always be reliable. It would increase the amount of space consumed, but that's what parity does.
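To make the "mix and match" idea from the header questions concrete, here is a minimal Python sketch. It is not Duplicacy's code. It assumes a 14-byte header with four big-endian fields (chunk size, data shards, parity shards, checksum), and `toy_checksum` is a hypothetical stand-in, since the discussion does not say how the real checksum is computed.

```python
import struct
from itertools import product

# Assumed field boundaries: size (8 bytes), data shards (2), parity shards (2), checksum (2).
FIELD_BOUNDS = [(0, 8), (8, 10), (10, 12), (12, 14)]

def toy_checksum(body: bytes) -> int:
    """Hypothetical 16-bit checksum: XOR of the six 16-bit words in the 12-byte body."""
    value = 0
    for (word,) in struct.iter_unpack(">H", body):
        value ^= word
    return value

def pack_header(size: int, data_shards: int, parity_shards: int) -> bytes:
    """Build a 14-byte header (byte order is an assumption)."""
    body = struct.pack(">QHH", size, data_shards, parity_shards)
    return body + struct.pack(">H", toy_checksum(body))

def is_valid(header: bytes) -> bool:
    """True if the stored checksum matches the first 12 bytes."""
    if len(header) != 14:
        return False
    return toy_checksum(header[:12]) == struct.unpack(">H", header[12:])[0]

def recover_header(first: bytes, second: bytes):
    """Return a header that passes the checksum, or None if no combination does."""
    # Cheapest case: one of the two copies is intact.
    for candidate in (first, second):
        if is_valid(candidate):
            return candidate
    # Otherwise splice fields from the two copies and test every combination.
    for choice in product((first, second), repeat=len(FIELD_BOUNDS)):
        candidate = b"".join(src[lo:hi] for src, (lo, hi) in zip(choice, FIELD_BOUNDS))
        if is_valid(candidate):
            return candidate
    return None
```

With only two copies and a 16-bit checksum, a spliced header could in principle pass by coincidence, which is one argument for the third copy or stronger checksum raised in the questions above.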

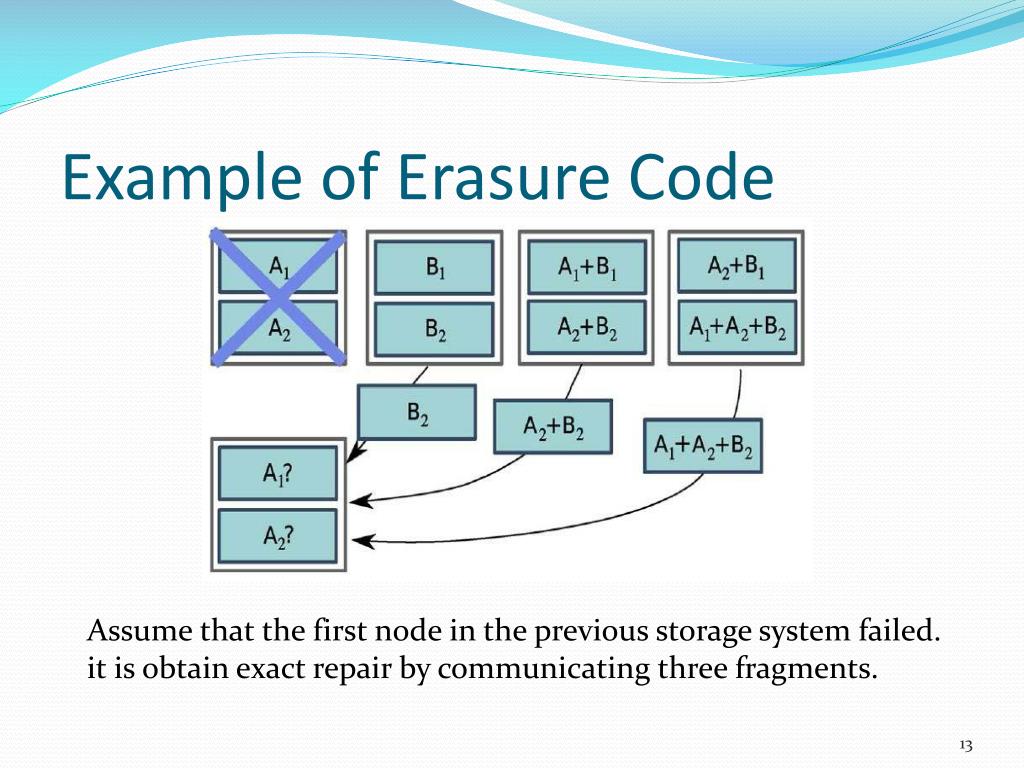

The encoded chunk file starts with a 10-byte unique banner, then a 14-byte header containing the chunk size and parity parameters, followed by hashes of each shard, then the contents of the shards, and finally the 14-byte header again for redundancy. The header fields are:

| chunk size (8 bytes) | #data shards (2 bytes) | #parity shards (2 bytes) | checksum (2 bytes) |

Actually, unless I'm misunderstanding the way you've diagrammed things here, it looks like the current scheme also only protects against corruption within a chunk and not against a missing chunk.

I did wonder myself whether this would be a concern, but the implementation complexity of adding cross-chunk parity would be a nightmare to deal with… I personally don't think missing chunks should, under normal circumstances, be a common failure mode. In fact, with the proper logic, Duplicacy should only upload a snapshot file when all the chunks have been uploaded. If chunks go missing later, that most definitely is an underlying storage/filesystem issue, which I don't think parity should solve. The regular check command is inexpensive enough that it can detect such situations, and you can fix a storage in good time. However, I do think Duplicacy needs extra checks to make sure at least the size of each uploaded chunk is correct, and that there aren't situations where we have 0-byte files due to lack of disk space and similar failures.

Yeah, the cross-chunk parity was a concern of mine as well, though it's not too different from the current case where a chunk can't be pruned because it contains a few bytes of referenced data. Cross-chunk parity would basically just be more data that hangs around a bit longer before it's pruned.
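As a rough illustration of the encoded chunk file layout described above (banner, header, shard hashes, shards, trailing header copy), the following Python sketch computes where each region would start. The banner and header sizes come from the description. The 32-byte hash size and the rule that every shard is `ceil(chunk_size / data_shards)` bytes are assumptions made for this example, and `encoded_layout` is a hypothetical helper, not part of Duplicacy.

```python
import math

BANNER_SIZE = 10  # "10 byte unique banner"
HEADER_SIZE = 14  # 8 (chunk size) + 2 (#data) + 2 (#parity) + 2 (checksum)

def encoded_layout(chunk_size, data_shards, parity_shards, hash_size=32):
    """Return ([(region, offset, length), ...], total_size) for an encoded chunk file.

    Assumptions: each shard holds ceil(chunk_size / data_shards) bytes and each
    shard hash is hash_size bytes. Neither value is stated in the text.
    """
    total_shards = data_shards + parity_shards
    shard_size = math.ceil(chunk_size / data_shards)
    regions = [
        ("banner", BANNER_SIZE),
        ("header", HEADER_SIZE),
        ("shard hashes", total_shards * hash_size),
        ("shards", total_shards * shard_size),
        ("header copy", HEADER_SIZE),
    ]
    layout, offset = [], 0
    for name, length in regions:
        layout.append((name, offset, length))
        offset += length
    return layout, offset

if __name__ == "__main__":
    layout, total = encoded_layout(1000, 5, 2)
    for name, offset, length in layout:
        print(f"{name:13s} offset={offset:5d} length={length}")
    print("total bytes:", total)
```

Under these assumptions, the fixed cost per chunk is the banner, the two header copies, and one hash per shard. Everything else scales with the chunk size and the parity ratio.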
To check if a storage is configured with erasure coding, run `duplicacy -d list`; it should report the numbers of data and parity shards:

Data shards: 5, parity shards: 2

With erasure coding enabled, you can run backup, check, prune, etc. as usual. When a bad chunk is detected, you'll see log messages like this:

Restoring /private/tmp/duplicacy_test/repository to revision 1

To initialize a storage with erasure coding enabled, run this command (assuming 5 data shards and 2 parity shards):

`duplicacy init -erasure-coding 5:2 repository_id storage_url`

This feature is available since CLI version 2.7.0.
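To get a feel for what a `5:2` setting implies, here is a small Python sketch. It assumes a Reed-Solomon style code (as mentioned in the discussion), where any `parity` shards can be rebuilt as long as the damaged ones can be identified, for example through the per-shard hashes. `parse_erasure_coding` and `describe` are hypothetical helpers written for this example, and the overhead figure ignores the banner, headers, and hashes.

```python
def parse_erasure_coding(spec):
    """Split a 'data:parity' string such as '5:2' into two positive integers."""
    data_text, parity_text = spec.split(":")
    data, parity = int(data_text), int(parity_text)
    if data <= 0 or parity <= 0:
        raise ValueError("shard counts must be positive")
    return data, parity

def describe(spec):
    """Summarize the shard counts, damage tolerance, and approximate space overhead."""
    data, parity = parse_erasure_coding(spec)
    return {
        "total_shards": data + parity,
        # Assumption: damaged shards are detectable, so up to `parity` can be rebuilt.
        "max_damaged_shards": parity,
        # Extra shard bytes stored relative to the original chunk data.
        "storage_overhead": parity / data,
    }

if __name__ == "__main__":
    print(describe("5:2"))
```

For `5:2` this gives 7 shards per chunk, tolerance for 2 damaged shards, and roughly 40% extra space for shard data.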
